AI (especially generative AI, large language models) and robotics (currently humanoid robots) are ushering in a new era – humanoid AI [1].

Humanoid AI is emerging as the next major advancement in AI and robotics, paving the way for general humanoid intelligence, artificial general intelligence, and artificial narrow intelligence.

Humanoid AI opens a new landscape and era of machines, minds, humanity, and intelligence that range from human-like to human-level.

Humanoid AI aims for real-time, live, situated, multimodal, and interactive humanoid robots that are human-like (emotional, affective, and endowed with partial humanity), driven by humanity modeling [2], generative AI, LLMs, and LMMs, and integrating other AI technologies.

Humanoid AI: Synergizing humanity, generative and human-level AI into humanoids

Over their approximately 60-year history, humanoid robots have now entered a new era: AI humanoids, or AI-powered humanoid robotics. AI humanoids synergize advances in large language models (LLMs), large multimodal models (LMMs), generative AI, and human-level AI with humanoid robotics, the omniverse, and decentralized AI. This marks a transition from human-looking to humane humanoids and fosters human-like robotics as a new area of AI: humanoid AI, which also integrates partial humanity into human-like to human-level humanoids.

Humanoid AI has emerged as an integrative ecosystem of humans, AI, robotics, and the web, revolutionizing the landscape of the intelligent digital economy, societies, and cultures. While only a limited number of humanoids are currently empowered by LLMs or driven by generative AI, humanoid AI is driving the fast-paced development of real-time, interactive, and humane humanoids, with revolutionary advancements and possibilities:

- Transitioning from traditional task-specific hand engineering and programming methodologies to semi-task-specific, task-agnostic, or even open-task applications; fostering greater versatility and flexibility.

- Evolving from predefined and rule-driven behaviors to online, real-time, and learning-driven task execution; continuously improving performance, adaptability, real-world feedback, and experiences.

- Moving away from individual robot-centric approaches towards multi-robot, multi-task, multiparty, and process-oriented operations, control, planning, and task execution; enhancing efficiency and scalability in complex environments.

Evolving landscape of humanoid AI: Human-looking to humane and human-like humanoids

Humanoid robots have a human-like structure and appearance, assembled from human-size and human-looking body parts that take on human senses, behaviors, functions, humanity, and intelligence. Fig. 1 illustrates the evolution of humanoids: a paradigm shift from human-looking to humane and human-like.

Fig. 1. Evolution landscape of human-looking to humane, human-like and human-level humanoid robots and AI [1].

Taxonomy of AI robotics and humanoid AI

The taxonomy of AI humanoid robots and humanoid AI reflects the interaction and synergy between robotics and AI and between intelligence systems and human systems. Fig. 2 shows a taxonomy of AI robots and AI humanoid robots. AI humanoids replicate, synthesize, and meta-synthesize human systems and intelligence systems. This expands, upscales, and transforms robotics toward a spectrum of humanoid functions. A family of AI humanoid functions emerges, such as humanoid interaction, humanoid collaboration, humanoid planning, humanoid navigation, humanoid manipulation, humanoid perception, humanoid learning, humanoid control, humanoid cognition, humanoid emotion, and humanoid humanity.

Fig. 2. The taxonomy of humanoid AI: Interactions and synergy between robotics, AI, intelligence and human systems [1].

Humanoid AI with humanity modeling

AI humanoids undertake real-time, situated, and interactive mind-to-action, perception-to-behavior, and vision-to-emotion transformations, achieving an unprecedented degree of replicating human characteristics and intelligence. Realizing this vision requires systematic humanity modeling, ensuring that AI humanoids are shaped, guided, and governed by robust frameworks of AI humanity and robotic humanity.

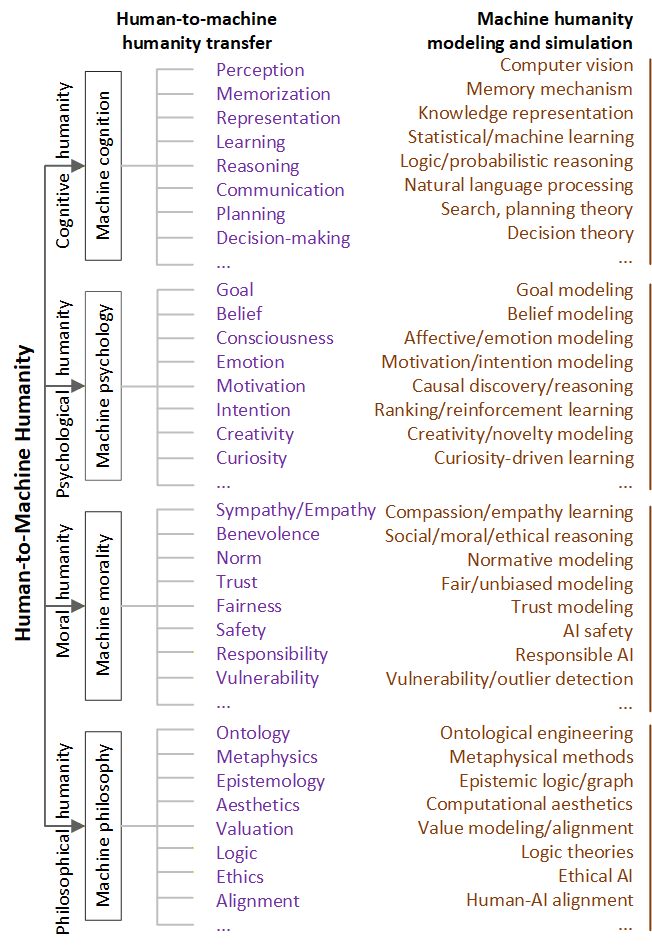

Humanity refers to human qualities, particularly the positive biological, cognitive, psychological, moral, social, and philosophical characteristics, dimensions, capacities, and purposes that define human beings. Our focus is on machine-compatible humanity (i.e., not the full spectrum of humaneness) and on how to model, replicate, verify, and align these qualities with humanoids, with the aim of creating human-comfortable, safe, and responsible humane humanoids [2]. Humanity modeling is essential for creating humane machines, facilitating cognitive, psychological, moral, and philosophical machine-human interaction, cooperation, collaboration, coexistence, and symbiosis. Fig. 3 shows human-to-machine humanity with corresponding general humanity modeling.

Fig. 3. Human-to-machine humanity with corresponding general humanity modeling [2]

Enabling humane and human-level humanoids

Many techniques are required to enable humane and human-level robots, such as mechanical, material, biomedical, electrical and anthropomorphic designs. Intelligent techniques to enable humane and human-level robots include: (1) humanizing robots toward humane and human-level features, structures, functions and moral traits; (2) digitizing human features in robotics; and (3) intelligentizing robots with human intelligence in complex decentralized, distributed, or even virtualized applications and environments. Essential studies include:

- building mind-to-action mindful and actionable humanoids

- supporting omnimodal perception-to-behavior humanoid modeling

- advancing human-like humanoids with human-level AI

- hybridizing humanoids with humanoid animation, imitation, digital twins, metaverse and mixed reality

- hybridizing humanoids with decentralized AI for decentralized humanoids: on-humanoid, edge and cloud humanoid systems

Fig. 4 illustrates a framework of decentralized humanoid AI systems.

Fig. 4. Decentralized humanoids: On-humanoid, edge and cloud humanoid AI framework, synergizing humans, humanoids, edge and cloud devices, algorithms and services including LLMs [2].
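
As a rough illustration of how work might be divided across the on-humanoid, edge, and cloud tiers, the sketch below places each task on the nearest tier that satisfies its latency budget and compute demand. It is not taken from [2]: the `Task` fields, the `TIER_PROFILES` numbers, and the `place` function are hypothetical and chosen only to make the idea concrete.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    ON_HUMANOID = "on-humanoid"
    EDGE = "edge"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Task:
    name: str
    latency_budget_ms: float  # maximum tolerable round-trip latency
    compute_units: float      # abstract compute demand (hypothetical unit)


# Hypothetical tier profiles: (round-trip latency in ms, compute capacity).
# Real values depend entirely on the hardware and network in use.
TIER_PROFILES = {
    Tier.ON_HUMANOID: (5.0, 1.0),
    Tier.EDGE: (30.0, 10.0),
    Tier.CLOUD: (200.0, 1000.0),
}


def place(task: Task) -> Tier:
    """Return the nearest tier that meets the task's latency and compute needs."""
    for tier in (Tier.ON_HUMANOID, Tier.EDGE, Tier.CLOUD):
        latency_ms, capacity = TIER_PROFILES[tier]
        if latency_ms <= task.latency_budget_ms and task.compute_units <= capacity:
            return tier
    raise ValueError(f"no tier can serve task {task.name!r}")


if __name__ == "__main__":
    for task in (
        Task("balance control", latency_budget_ms=10, compute_units=0.5),
        Task("emotion recognition", latency_budget_ms=100, compute_units=5),
        Task("LLM dialogue", latency_budget_ms=1000, compute_units=500),
    ):
        print(f"{task.name}: {place(task).value}")
```

Preferring the nearest feasible tier keeps latency-critical control on the robot and sends only heavy workloads, such as LLM inference, to remote services. A real system would also weigh bandwidth, privacy, and energy.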

Use Cases

UGotMe: An Embodied System for Affective Human-Robot Interaction [3]

Abstract

Equipping humanoid robots with the capability to understand emotional states of human interactants and express emotions appropriately according to situations is essential for affective human-robot interaction. However, enabling current vision-aware multimodal emotion recognition models for affective human-robot interaction in the real world raises embodiment challenges: addressing the environmental noise issue and meeting real-time requirements. First, in multiparty conversation scenarios, the noise inherent in the visual observation of the robot, which may come from either 1) distracting objects in the scene or 2) inactive speakers appearing in the field of view of the robot, hinders the models from extracting emotional cues from vision inputs. Second, real-time response, a desired feature for an interactive system, is also challenging to achieve. To tackle both challenges, we introduce an affective human-robot interaction system called UGotMe designed specifically for multiparty conversations. Two denoising strategies are proposed and incorporated into the system to solve the first issue. Specifically, to filter out distracting objects in the scene, we propose extracting face images of the speakers from the raw images and introduce a customized active face extraction strategy to rule out inactive speakers. As for the second issue, we employ efficient data transmission from the robot to the local server to improve real-time response capability. We deploy UGotMe on a humanoid robot named Ameca to validate its real-time inference capabilities in practical scenarios.
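
The two denoising steps described in the abstract can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the UGotMe implementation: the abstract does not say how active speakers are identified, so the `speaking_score` field, the `threshold` value, the bounding-box format, and the toy list-of-lists frame are all hypothetical stand-ins for a real face detector and active-speaker model.

```python
from dataclasses import dataclass
from typing import List, Tuple

Image = List[List[int]]  # toy grayscale frame: rows of pixel values


@dataclass
class FaceDetection:
    box: Tuple[int, int, int, int]  # (top, left, bottom, right); hypothetical format
    speaking_score: float           # hypothetical activity score in [0, 1]


def crop(frame: Image, box: Tuple[int, int, int, int]) -> Image:
    """Keep only the pixels inside the face box, discarding scene clutter."""
    top, left, bottom, right = box
    return [row[left:right] for row in frame[top:bottom]]


def extract_active_faces(
    frame: Image, detections: List[FaceDetection], threshold: float = 0.5
) -> List[Image]:
    """Apply both denoising steps: crop to faces, then drop inactive speakers."""
    return [
        crop(frame, d.box)
        for d in detections
        if d.speaking_score >= threshold
    ]


if __name__ == "__main__":
    # 4x6 toy frame whose pixel value encodes its position (row * 10 + column).
    frame = [[r * 10 + c for c in range(6)] for r in range(4)]
    detections = [
        FaceDetection(box=(0, 0, 2, 2), speaking_score=0.9),  # active speaker
        FaceDetection(box=(1, 3, 3, 6), speaking_score=0.1),  # silent bystander
    ]
    print(extract_active_faces(frame, detections))
```

Only the cropped face of the active speaker would be passed on to the emotion recognition model, so background objects and silent bystanders never reach it.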

References

- [1] Longbing Cao. Humanoid Robots and Humanoid AI: Review, Perspectives and Directions, ACM Computing Surveys, Volume 58, Issue 4, Article No.: 97, 1-37, 2025. arXiv, 19 March, 2024.

- [2] Longbing Cao. Humanoid AI with Humanity Modeling: Bridging Humans and Virtual–Physical Humanoids, 1-39, 2025.

- [3] Peizhen Li, Longbing Cao, Xiao-Ming Wu, Xiaohan Yu, Runze Yang. UGotMe: An Embodied System for Affective Human-Robot Interaction, https://arxiv.org/abs/2410.18373, ICRA, 2025.

- Peizhen Li, Longbing Cao, Xiao-Ming Wu, and Yang Zhang. VividFace: Real-Time and Realistic Facial Expression Shadowing for Humanoid Robots, https://arxiv.org/abs/2602.07506, ICRA, 2026.

- F Cenacchi, L Cao, D Richards. Humanoid Robots in Early Disease Detection: Challenges, Techniques and Opportunities, 1-44, 2025.

- X Zhang and L Cao. Facial Expression and Behavior Modeling from Digital Avatars to Expressive Humanoid Robots: A Review, 2025.

- Xu Zhang, Longbing Cao, Runze Yang, Zhangkai Wu. Learning Physiology-Informed Vocal Spectrotemporal Representations for Speech Emotion Recognition, https://arxiv.org/pdf/2602.13259, 2026.

- Peizhen Li, Longbing Cao, Xiao-Ming Wu, Runze Yang, Xiaohan Yu. X2C: A Dataset Featuring Nuanced Facial Expressions for Realistic Humanoid Imitation, https://arxiv.org/abs/2505.11146

- Filippo Cenacchi, Deborah Richards and Longbing Cao. Multi-Disorder Mental Health AI Diagnosis: Tri-Modal, Severity-Aware Fusion for Depression and PTSD